Resources

Framework links and dedicated resource pages for NativQA datasets and related work.

Resource pages

Dataset

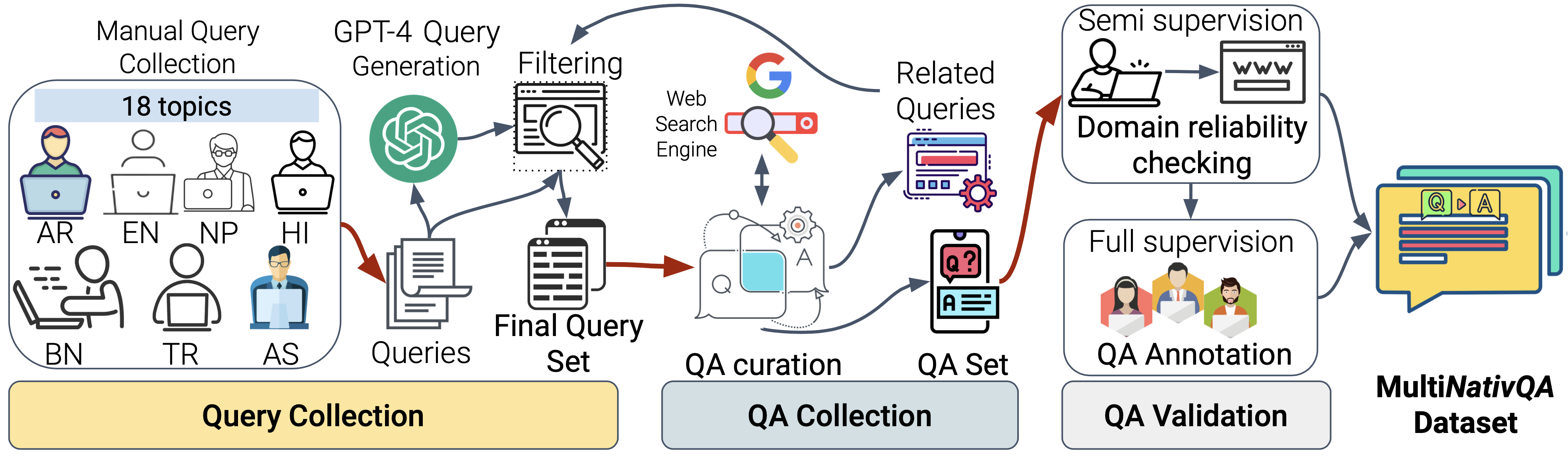

MultiNativQA Dataset

Explore dataset links, live download metrics, language coverage, regional scope, and topic distribution for the multilingual natural QA benchmark.

Spoken visual QA

EverydayMMQA and OASIS

Explore the multilingual and multimodal framework for culturally grounded spoken visual QA, with the paper summary, pipeline, dataset scale, and benchmark findings in one place.

AudioLLM resource

MENASpeechBank

Explore the Arabic and MENA speech resource with a reference voice bank, persona-conditioned multi-turn conversations, and live Hugging Face download metrics.

NativQA Framework

Quick install: `pip install nativqa-framework`

The NativQA Framework provides the collection and processing pipeline used to build culturally aligned QA resources from native-speaker and region-aware queries.

It supports multilingual dataset construction workflows that can feed both benchmarking and fine-tuning, and it also serves as the foundation for the public resource pages linked above.
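To confirm that the quick-install command succeeded, one option is to query the installed distribution metadata from Python. The following is a minimal sketch that uses only the standard library; the helper name `installed_version` is hypothetical and not part of the NativQA Framework, and the distribution name is taken from the pip command above.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent.

    Hypothetical helper for illustration; it only reads package metadata
    and does not import or run the package itself.
    """
    try:
        return version(dist_name)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    # Distribution name as given in the quick-install command.
    found = installed_version("nativqa-framework")
    if found is None:
        print("nativqa-framework is not installed; run: pip install nativqa-framework")
    else:
        print(f"nativqa-framework {found} is installed")
```

Checking metadata rather than importing the package avoids assuming a particular import name, which the page does not state.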