EverydayMMQA

EverydayMMQA and OASIS resource page for culturally grounded spoken visual QA.

Spoken visual QA

EverydayMMQA: A Multilingual and Multimodal Framework for Culturally Grounded Spoken Visual QA

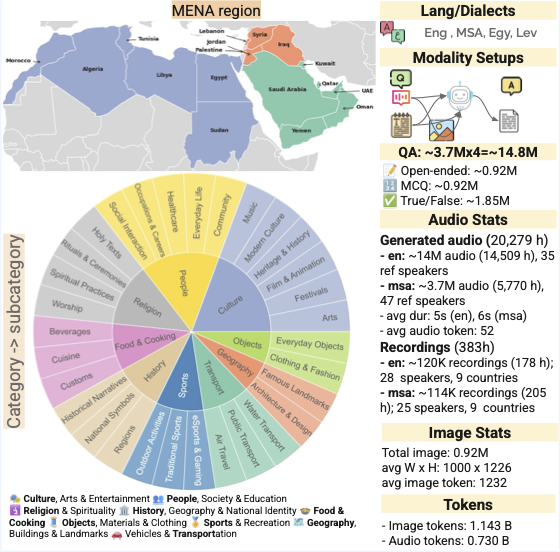

EverydayMMQA is a framework for building culturally grounded spoken visual QA resources. The paper introduces OASIS, a large-scale multimodal benchmark and training resource spanning English and Arabic varieties across 18 Arab countries.

Availability: Links to the public framework and dataset will be added here once the release is public.

Background

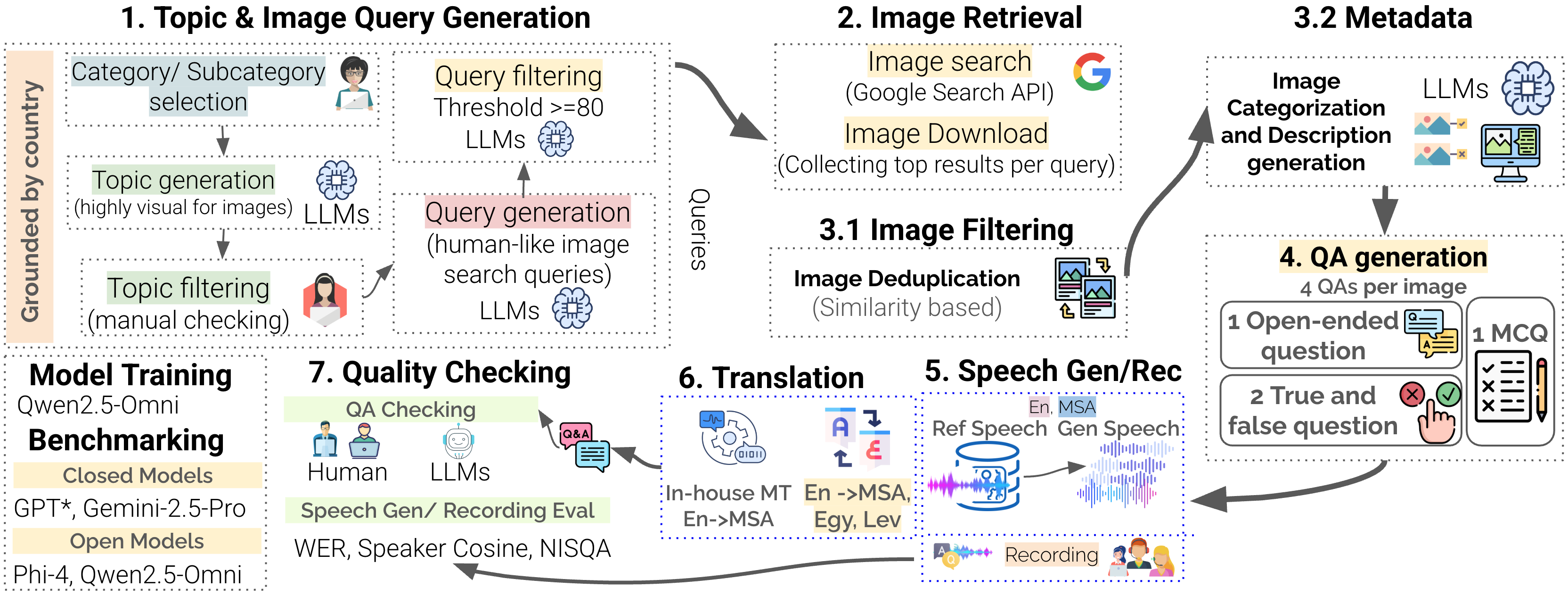

EverydayMMQA targets a gap in multimodal evaluation: many current models perform well on standard visual question answering but still miss culturally grounded, everyday knowledge, especially in underrepresented languages. The framework organizes the full data creation pipeline, from culturally grounded topic and query generation through country-localized image retrieval, filtering, QA generation, speech generation, translation, and quality checking. Using this pipeline, the paper develops OASIS as a benchmark and training resource for spoken visual QA.

OASIS at a glance

- Images in the final resource

- QA pairs across language varieties

- Spoken questions

- 18 Arab countries covered

- 4 language varieties

- 4 input settings

- Top-level categories

- Subcategories

The paper also reports roughly 20K hours of generated speech covering the full resource and 141 hours of human recordings for the benchmark subsets.

Framework pipeline

The framework is designed as an end-to-end pipeline for culturally grounded spoken visual QA. It combines query generation, locale-aware image retrieval, filtering, multilingual QA generation, speech generation and recording, translation, and quality control into one reusable process.

- Culturally grounded topic and query generation with model-assisted filtering.

- Country-localized image retrieval using locale settings and license constraints.

- Image deduplication, filtering, categorization, and metadata generation.

- Open-ended, multiple-choice, and true-false QA generation per image.

- Speech generation, human recording, translation, and final quality checks.
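The stages above can be read as a chain of functions, each mapping a list of records to a filtered or enriched list. The following Python sketch shows only two such stages under stated assumptions: the record fields (`url`, `license`, `image_bytes`, `country`), the `ALLOWED_LICENSES` set, and all helper names are hypothetical, and exact-duplicate removal via SHA-256 is a simple stand-in. The paper's own deduplication may well use near-duplicate detection instead.

```python
import hashlib
from typing import Any, Callable, Dict, Iterable, List

Record = Dict[str, Any]
Stage = Callable[[List[Record]], List[Record]]

# Hypothetical license whitelist; the actual constraints used for OASIS are not given here.
ALLOWED_LICENSES = {"cc0", "cc-by", "cc-by-sa"}


def filter_by_license(records: List[Record]) -> List[Record]:
    """Keep only images whose (case-insensitive) license is in the assumed allowed set."""
    return [r for r in records if str(r.get("license", "")).lower() in ALLOWED_LICENSES]


def dedupe_exact(records: List[Record]) -> List[Record]:
    """Drop byte-identical images by SHA-256 digest, keeping the first occurrence."""
    seen = set()
    kept = []
    for r in records:
        digest = hashlib.sha256(r["image_bytes"]).hexdigest()
        if digest not in seen:
            seen.add(digest)
            kept.append(r)
    return kept


def run_pipeline(records: List[Record], stages: Iterable[Stage]) -> List[Record]:
    """Apply each stage in order; every stage takes and returns a list of records."""
    for stage in stages:
        records = stage(records)
    return records


if __name__ == "__main__":
    raw = [
        {"url": "a.jpg", "license": "CC-BY", "image_bytes": b"\x01\x02", "country": "EG"},
        {"url": "b.jpg", "license": "CC-BY", "image_bytes": b"\x01\x02", "country": "EG"},
        {"url": "c.jpg", "license": "all-rights-reserved", "image_bytes": b"\x03", "country": "JO"},
    ]
    result = run_pipeline(raw, [filter_by_license, dedupe_exact])
    print([r["url"] for r in result])  # ['a.jpg']
```

Keeping every stage the same shape (list in, list out) makes it straightforward to insert, reorder, or cache further stages, such as categorization or QA generation, without touching the others.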

Dataset analysis

OASIS integrates speech, images, and text to support culturally grounded evaluation beyond simple object recognition. The resource spans English, Modern Standard Arabic, Egyptian Arabic, and Levantine Arabic across a balanced set of country-specific contexts.

Each image is paired with four QA instances: one open-ended question, one multiple-choice question, and two true-false questions. The benchmark supports four main input settings for evaluation.
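The per-image QA composition lends itself to a small schema with a validity check. This is a hypothetical sketch, not the released OASIS format: the class and field names, the one-entry-per-image-and-variety layout, the four-option multiple-choice example, and the lowercase `"true"`/`"false"` answer encoding are all assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Variety(Enum):
    """The four language varieties named for OASIS (codes are hypothetical)."""
    ENGLISH = "en"
    MSA = "msa"        # Modern Standard Arabic
    EGYPTIAN = "egy"   # Egyptian Arabic
    LEVANTINE = "lev"  # Levantine Arabic


class QAType(Enum):
    OPEN_ENDED = "open_ended"
    MULTIPLE_CHOICE = "multiple_choice"
    TRUE_FALSE = "true_false"


@dataclass
class QAItem:
    qa_type: QAType
    question: str
    answer: str
    choices: Optional[List[str]] = None  # only used for multiple-choice items


@dataclass
class ImageEntry:
    image_id: str
    country: str
    variety: Variety
    qa: List[QAItem] = field(default_factory=list)

    def validate(self) -> None:
        """Check the 1 open-ended + 1 multiple-choice + 2 true-false composition."""
        counts = {t: 0 for t in QAType}
        for item in self.qa:
            counts[item.qa_type] += 1
            if item.qa_type is QAType.MULTIPLE_CHOICE and item.answer not in (item.choices or []):
                raise ValueError("multiple-choice answer must be one of the choices")
            if item.qa_type is QAType.TRUE_FALSE and item.answer not in ("true", "false"):
                raise ValueError("true-false answer must be 'true' or 'false'")
        expected = {QAType.OPEN_ENDED: 1, QAType.MULTIPLE_CHOICE: 1, QAType.TRUE_FALSE: 2}
        if counts != expected:
            raise ValueError(f"unexpected QA composition: {counts}")


if __name__ == "__main__":
    entry = ImageEntry(
        image_id="img-0001",  # hypothetical identifier
        country="EG",
        variety=Variety.EGYPTIAN,
        qa=[
            QAItem(QAType.OPEN_ENDED, "<open-ended question>", "<free-text answer>"),
            QAItem(QAType.MULTIPLE_CHOICE, "<mcq question>", "B", ["A", "B", "C", "D"]),
            QAItem(QAType.TRUE_FALSE, "<statement 1>", "true"),
            QAItem(QAType.TRUE_FALSE, "<statement 2>", "false"),
        ],
    )
    entry.validate()  # raises ValueError if the composition is wrong
    print("valid entry with", len(entry.qa), "QA items")
```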

Benchmarking

The paper evaluates a mix of closed and open multimodal models, including GPT-4.1, GPT-4o-audio, GPT-5, Gemini 2.5 Pro, Qwen2.5-Omni variants, Phi-4, and a fine-tuned Qwen2.5-3B-Omni model. The findings are consistent across models: visual grounding matters most, and smaller models improve substantially when the training signal aligns speech, text, and images.

Images shift the bottleneck

Adding the image produces large gains across models and moves the remaining challenge from recognition toward faithful answer generation.

Grounding narrows gaps

Visual grounding reduces cross-lingual and dialect gaps, especially for Arabic varieties that are harder in text-only settings.

Speech benefits most

Images act as a modality equalizer by recovering much of the performance lost to speech and transcript noise.

Fine-tuning helps compact models

Light multimodal fine-tuning makes smaller systems materially more stable and competitive, particularly on audio-linked inputs.
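Findings such as "grounding narrows gaps" can be made concrete by computing accuracy per language variety within each input setting and reporting the spread between the best and worst variety. The Python sketch below is a hypothetical illustration: the setting names, the per-item correctness flags, and the max-minus-min gap definition are assumptions rather than the paper's evaluation protocol, and the toy predictions are invented for demonstration only.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each prediction: (input_setting, variety, is_correct). Setting names are hypothetical.
Prediction = Tuple[str, str, bool]


def accuracy_by_variety(preds: Iterable[Prediction]) -> Dict[str, Dict[str, float]]:
    """Return {setting: {variety: accuracy}} from per-item correctness flags."""
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    correct: Dict[Tuple[str, str], int] = defaultdict(int)
    for setting, variety, ok in preds:
        totals[(setting, variety)] += 1
        correct[(setting, variety)] += int(ok)
    table: Dict[str, Dict[str, float]] = defaultdict(dict)
    for (setting, variety), n in totals.items():
        table[setting][variety] = correct[(setting, variety)] / n
    return dict(table)


def variety_gap(acc: Dict[str, float]) -> float:
    """Spread between the best- and worst-scoring variety (one possible gap measure)."""
    return max(acc.values()) - min(acc.values())


if __name__ == "__main__":
    toy = [  # invented toy data, not results from the paper
        ("speech_only", "en", True), ("speech_only", "en", True),
        ("speech_only", "lev", True), ("speech_only", "lev", False),
        ("speech_image", "en", True), ("speech_image", "en", True),
        ("speech_image", "lev", True), ("speech_image", "lev", True),
    ]
    table = accuracy_by_variety(toy)
    for setting, acc in table.items():
        print(setting, acc, "gap =", variety_gap(acc))
```

A max-minus-min spread is deliberately simple; averaging pairwise differences or reporting each Arabic variety's gap relative to English are equally reasonable choices, depending on which comparison the analysis needs.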

Citation

@article{alam2025everydaymmqa,
  title={EverydayMMQA: A Multilingual and Multimodal Framework for Culturally Grounded Spoken Visual QA},
  author={Alam, Firoj and Shahroor, Ali Ezzat and Hasan, Md. Arid and Ali, Zien Sheikh and Bhatti, Hunzalah Hassan and Kmainasi, Mohamed Bayan and Chowdhury, Shammur Absar and Mousi, Basel and Dalvi, Fahim and Durrani, Nadir and Milic-Frayling, Natasa},
  journal={arXiv preprint arXiv:2510.06371},
  year={2025}
}