MultiNativQA

MultiNativQA: multilingual culturally aligned natural QA benchmark

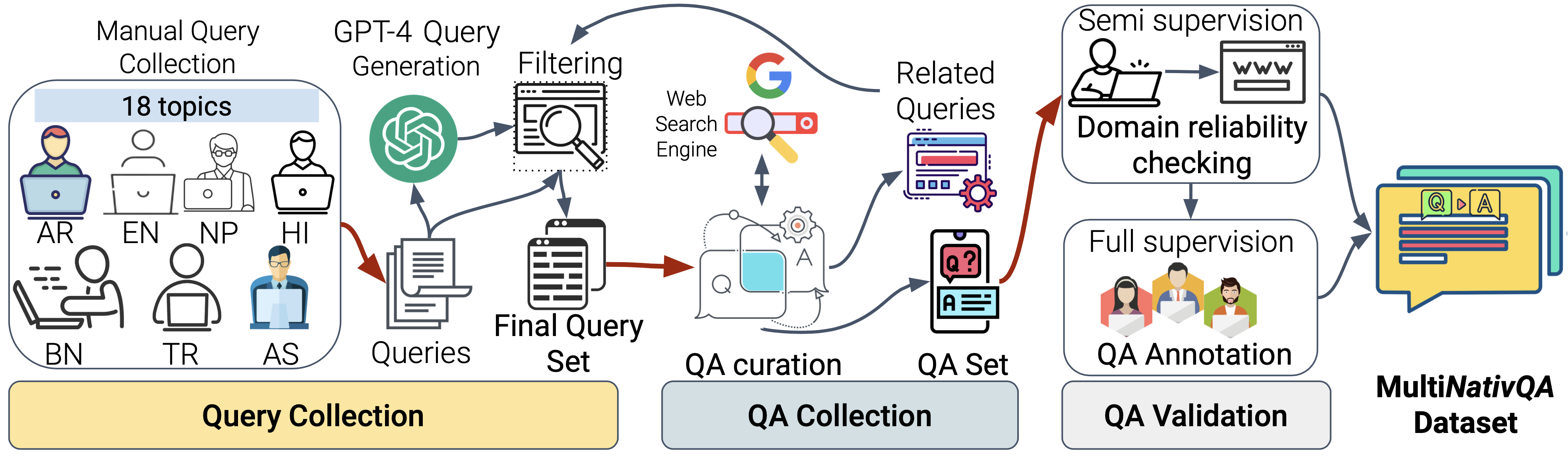

MultiNativQA is a multilingual benchmark built with native-speaker queries and local context for more realistic evaluation of large language models. It supports both benchmarking and fine-tuning across languages with different resource levels.

Built with the NativQA Framework.

Overview

MultiNativQA is designed to evaluate large language models with queries that reflect how native speakers ask questions in their own languages and regions. Instead of relying on generic or synthetic prompts, the benchmark emphasizes cultural alignment, local knowledge, and realistic information needs across diverse linguistic settings.

Dataset at a glance

dataset configs

license

manually annotated QA pairs

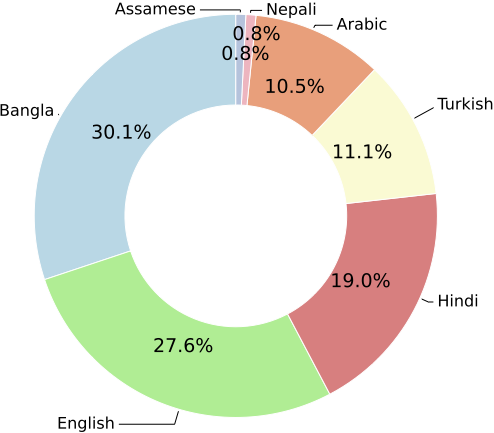

languages represented

regions covered

seed topics for query collection

Coverage at a glance

MultiNativQA spans native-speaker queries collected across nine regions and covers both everyday and specialized topics to better stress-test multilingual model behavior in realistic settings.

Why this benchmark matters

Native-speaker grounded

Queries come from native speakers, which makes the benchmark closer to real local information needs than template-heavy alternatives.

Region-aware evaluation

The dataset emphasizes cultural and regional variation that multilingual models often miss when evaluation sets are too generic.
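One way to make regional variation visible is to report scores per region rather than a single pooled number. The sketch below is only an illustration under stated assumptions: the record keys `region`, `reference`, and `prediction` are hypothetical and not the dataset's actual schema, the toy records are invented, and exact match is used as a simple placeholder metric rather than the benchmark's official scoring.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(text.lower().split())


def per_region_exact_match(records):
    """Return {region: fraction of predictions that exactly match the reference}."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for rec in records:
        region = rec["region"]  # hypothetical field name
        totals[region] += 1
        if normalize(rec["prediction"]) == normalize(rec["reference"]):
            hits[region] += 1
    return {region: hits[region] / totals[region] for region in totals}


# Toy records invented for illustration; they are not taken from the dataset.
records = [
    {"region": "region_a", "reference": "Yes", "prediction": "yes"},
    {"region": "region_a", "reference": "Blue", "prediction": "red"},
    {"region": "region_b", "reference": "Forty two", "prediction": "forty  two"},
]
scores = per_region_exact_match(records)
```

Keeping the scores keyed by region means a model that does well on one region and poorly on another cannot hide behind an average.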

Evaluation and tuning ready

The benchmark supports both model evaluation and downstream fine-tuning workflows built on culturally aligned QA data.
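For the fine-tuning side, QA pairs usually need to be reshaped into whatever record format the training tooling expects. The following is a minimal sketch assuming a chat-style JSON Lines layout with a `messages` list; that layout, the helper names `to_chat_record` and `to_jsonl`, and the sample pairs are all assumptions for illustration, not part of the dataset or the NativQA Framework.

```python
import json


def to_chat_record(question: str, answer: str) -> dict:
    """Wrap one QA pair as a two-turn chat example (assumed target format)."""
    return {
        "messages": [
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(pairs) -> str:
    """Serialize (question, answer) pairs as JSON Lines, one record per line."""
    # ensure_ascii=False keeps non-Latin scripts readable instead of \u escapes.
    return "\n".join(
        json.dumps(to_chat_record(q, a), ensure_ascii=False) for q, a in pairs
    )


# Invented sample pairs; the second one checks that non-Latin text survives.
sample_pairs = [("q1", "a1"), ("প্রশ্ন", "উত্তর")]
jsonl_text = to_jsonl(sample_pairs)
```

Passing `ensure_ascii=False` matters for a multilingual corpus, since the default would write every non-ASCII character as an escape sequence.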

Citation

@inproceedings{hasan-etal-2025-nativqa,
  title = "{NativQA:} Multilingual Culturally-Aligned Natural Query for {LLM}s",
  author = "Hasan, Md. Arid and Hasanain, Maram and Ahmad, Fatema and Laskar, Sahinur Rahman and Upadhyay, Sunaya and Sukhadia, Vrunda N and Kutlu, Mucahid and Chowdhury, Shammur Absar and Alam, Firoj",
  booktitle = "Findings of the Association for Computational Linguistics: ACL 2025",
  year = "2025",
  address = "Vienna, Austria",
  publisher = "Association for Computational Linguistics",
  url = "https://aclanthology.org/2025.findings-acl.770/",
  doi = "10.18653/v1/2025.findings-acl.770",
  pages = "14886--14909"
}